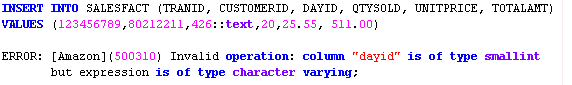

When contiguous fields are missing at the end of some records in the data files being loaded, COPY reports an error indicating a mismatch between the number of fields in the file and the number of columns in the target table. This can happen when columnar files (such as Parquet) that are produced by applications and ingested into Amazon Redshift via COPY have fields added over time (with matching new columns added to the target Amazon Redshift table). In such cases, some files may have no values for the newly added fields. To load these files, you previously had to either preprocess them to fill in values for the missing fields before running COPY, or use Amazon Redshift Spectrum to read the files from Amazon S3 and then use INSERT INTO to load the data into the Amazon Redshift table.

With the FILLRECORD parameter, you can now load data files with a varying number of fields in the same COPY command, as long as the target table has all columns defined. This improves ease of use because a single COPY command loads columnar files with varying fields directly into Amazon Redshift, instead of achieving the same result in multiple steps.

With the FILLRECORD parameter, missing columns are loaded as NULLs. For text and CSV formats, if the missing column is a VARCHAR column, zero-length strings are loaded instead of NULLs; to load NULLs into VARCHAR columns from text and CSV, specify the EMPTYASNULL keyword. NULL substitution only works if the column definition allows NULLs.

Use FILLRECORD while loading Parquet data from Amazon S3

In this section, we demonstrate the utility of FILLRECORD by using a Parquet file that has a smaller number of fields populated than the number of columns in the target Amazon Redshift table.
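As a rough illustration of the FILLRECORD behavior described here, the following sketch loads Parquet files that lack a trailing field into a table that defines it, and then shows the CSV variant with EMPTYASNULL. The table name `sales_events`, its columns, the bucket `example-bucket`, and the role ARN are all hypothetical placeholders, not values from the original post.

```sql
-- Hypothetical target table. The "region" column was added after
-- some of the files were written, so those files do not contain it.
CREATE TABLE sales_events (
    event_id   BIGINT,
    event_name VARCHAR(100),
    region     VARCHAR(50)   -- nullable, so a missing value can load as NULL
);

-- Parquet: without FILLRECORD, files missing the trailing "region" field
-- cause a field/column count mismatch error. With it, they load as NULL.
COPY sales_events
FROM 's3://example-bucket/events/parquet/'                          -- placeholder path
IAM_ROLE 'arn:aws:iam::123456789012:role/ExampleRedshiftCopyRole'   -- placeholder role
FORMAT AS PARQUET
FILLRECORD;

-- CSV: a missing VARCHAR field loads as a zero-length string unless
-- EMPTYASNULL is also specified.
COPY sales_events
FROM 's3://example-bucket/events/csv/'                              -- placeholder path
IAM_ROLE 'arn:aws:iam::123456789012:role/ExampleRedshiftCopyRole'   -- placeholder role
FORMAT AS CSV
FILLRECORD
EMPTYASNULL;
```

If `region` were declared NOT NULL, the NULL substitution would not apply, so the column definition is worth checking before relying on FILLRECORD.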
One of the fastest and most scalable methods for loading data into Amazon Redshift is the COPY command. This post dives into some of the recent enhancements made to the COPY command and how to use them effectively.

Overview of the COPY command

A best practice for loading data into Amazon Redshift is to use the COPY command. The COPY command loads data in parallel from Amazon Simple Storage Service (Amazon S3), Amazon EMR, Amazon DynamoDB, or multiple data sources on any remote hosts accessible through a Secure Shell (SSH) connection.

The COPY command reads and loads data in parallel from a file or multiple files in an S3 bucket. You can take maximum advantage of parallel processing by splitting your data into multiple files, in cases where the files are compressed. The COPY command appends the new input data to any existing rows in the target table. The COPY command can load data from Amazon S3 for the file formats AVRO, CSV, JSON, and TXT, and for columnar format files such as ORC and Parquet.
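To make the parallel-load point concrete, here is a minimal sketch of a COPY that loads several gzip-compressed CSV files sharing an S3 key prefix. It assumes the data was split beforehand into files such as `part-0000.gz`, `part-0001.gz`, and so on; the table `sales_events`, the bucket, the prefix, and the role ARN are hypothetical.

```sql
-- COPY treats the FROM value as a key prefix, so every object whose key
-- starts with "events/csv/part-" is loaded by this one command.
COPY sales_events                                                   -- hypothetical existing table
FROM 's3://example-bucket/events/csv/part-'                         -- placeholder prefix
IAM_ROLE 'arn:aws:iam::123456789012:role/ExampleRedshiftCopyRole'   -- placeholder role
FORMAT AS CSV
GZIP;   -- the input files are gzip-compressed
```

Because COPY appends to existing rows, running the same command twice loads the same files twice.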

Loading data is a key process for any analytical system, including Amazon Redshift. Loading very large datasets can take a long time and consume a lot of computing resources. How your data is loaded can also affect query performance. You can use many different methods to load data into Amazon Redshift.

Amazon Redshift is a fast, scalable, secure, and fully managed cloud data warehouse that makes it simple and cost-effective to analyze all your data using standard SQL. Amazon Redshift offers up to three times better price performance than any other cloud data warehouse. Tens of thousands of customers use Amazon Redshift to process exabytes of data per day and power analytics workloads such as high-performance business intelligence (BI) reporting, dashboarding applications, data exploration, and real-time analytics.

According to the Amazon Redshift documentation page "COPY from columnar data formats", it seems that loading data from Parquet format requires use of an IAM Role rather than IAM credentials:

COPY command credentials must be supplied using an AWS Identity and Access Management (IAM) role as an argument for the IAM_ROLE parameter or the CREDENTIALS parameter.

This would mean supplying the role through either the IAM_ROLE parameter or the CREDENTIALS parameter, the latter in the form: CREDENTIALS 'aws_iam_role=arn:aws:iam:::role/'

Thus, you need to use an IAM Role, even if the files are stored in your own AWS account.
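The two ways of passing an IAM role to COPY for a Parquet load might look like the sketch below. The table `sales_events`, the bucket path, the account ID `123456789012`, and the role name `ExampleRedshiftCopyRole` are placeholders chosen for illustration and do not come from the quoted answer.

```sql
-- Option 1: the IAM_ROLE parameter.
COPY sales_events                                                   -- hypothetical existing table
FROM 's3://example-bucket/events/parquet/'                          -- placeholder path
IAM_ROLE 'arn:aws:iam::123456789012:role/ExampleRedshiftCopyRole'   -- placeholder role
FORMAT AS PARQUET;

-- Option 2: the CREDENTIALS parameter carrying the same role ARN.
COPY sales_events
FROM 's3://example-bucket/events/parquet/'
CREDENTIALS 'aws_iam_role=arn:aws:iam::123456789012:role/ExampleRedshiftCopyRole'
FORMAT AS PARQUET;
```

Either form identifies the same role; the role must be attached to the cluster and allowed to read the S3 objects.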
